What Are the Best Internet Options for Bad Weather?

- Introduction to Weather and Internet Connectivity

- Types of Weather Conditions and Their Effects

- Impact of Rain on Internet Connectivity

- Snow and Ice: A Chilling Effect on Connectivity

- Wind and Storms: The Force of Nature on Connectivity

- Extreme Temperatures and Connectivity Challenges

- Preparedness and Mitigation Strategies

- Future Trends: Weather-Resilient Technologies

- Conclusion: The Crucial Link Between Weather and Connectivity

Introduction to Weather and Internet Connectivity

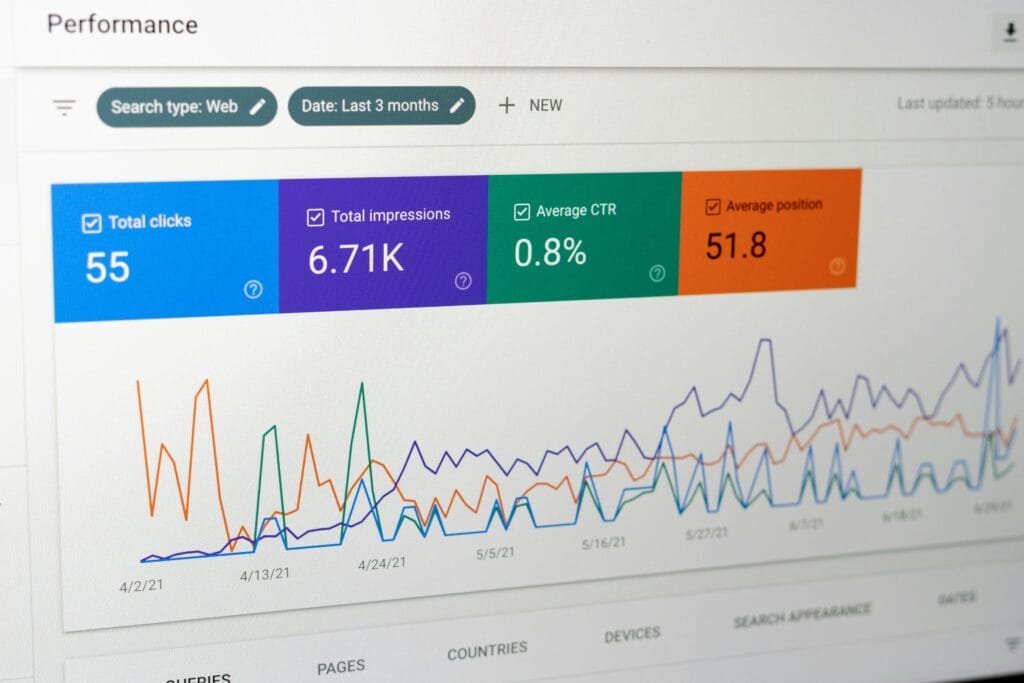

In the modern era, internet connectivity has become an essential utility for individuals and businesses alike. Several technologies deliver it, including wired broadband, satellite, and cellular networks. Each has distinct operating characteristics that shape how users experience the internet, and understanding those characteristics is the first step in recognizing how weather can affect connectivity.

Wired broadband, delivered over fiber-optic, coaxial cable, or DSL lines, provides high-speed internet access to homes and businesses. Its performance is generally stable under normal conditions. However, extreme weather such as heavy rain or snow can still disrupt service. These disruptions usually stem from physical damage to lines or supporting infrastructure and can lead to temporary outages.

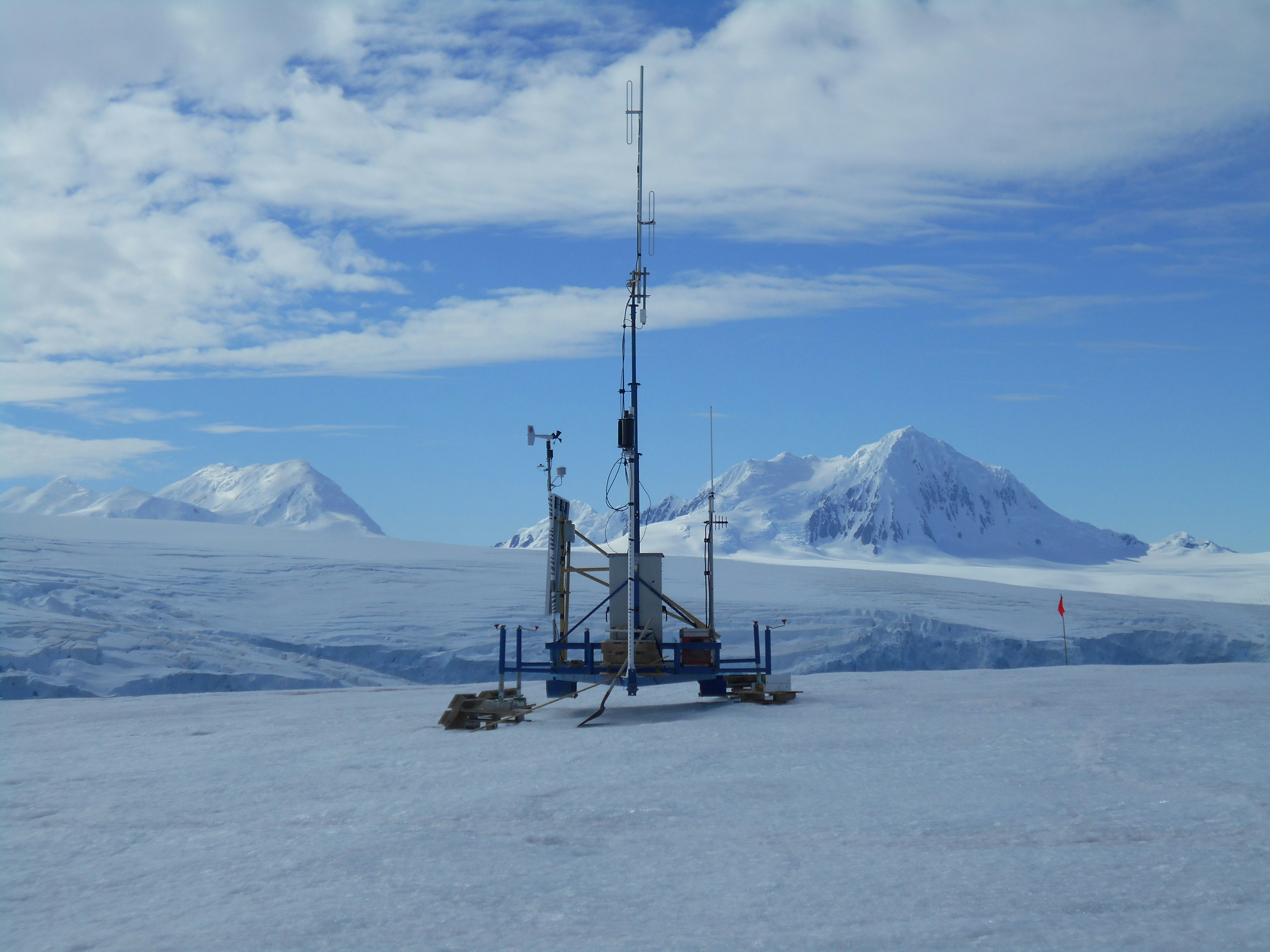

Satellite networks, on the other hand, offer internet access across vast and remote areas, and they are particularly sensitive to weather. Heavy rain, for example, can weaken the signals transmitted between satellites and ground-based receivers, an effect known as ‘rain fade’. The result is slower speeds and higher latency. This phenomenon shows how much satellite internet depends on clear weather to maintain optimal performance.

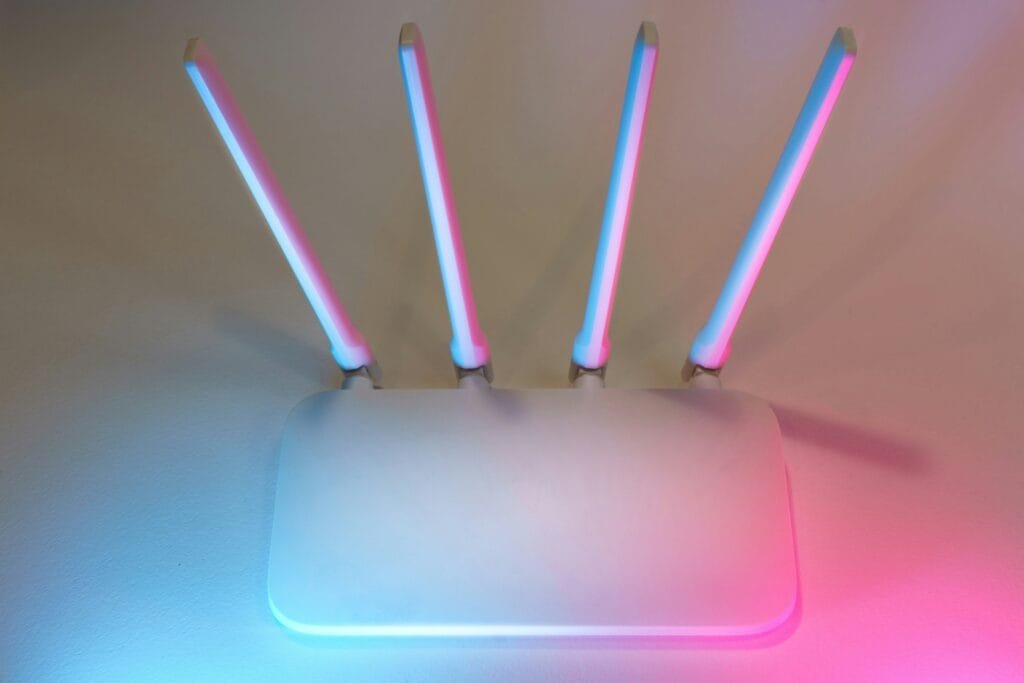

Cellular networks work differently, using a network of towers to connect with user devices. Weather events, particularly storms and high winds, can damage this infrastructure and lead to service interruptions. Atmospheric conditions such as fog and severe thunderstorms can also affect signal propagation, causing fluctuations in connection quality.

Examining each technology in turn shows how strongly environmental factors like weather can affect internet connectivity. It also lays the groundwork for discussing specific incidents and broader trends in how weather affects network performance.

Types of Weather Conditions and Their Effects

Weather conditions can have a significant impact on internet connectivity, influencing both the quality and reliability of service across various systems. Understanding how these environmental factors interact with digital infrastructure is essential for users and providers alike.

One of the most common weather conditions affecting connectivity is rain. Rain can interfere with microwave signals used in point-to-point wireless communications, leading to signal attenuation. Furthermore, heavy rainfall can cause physical damage to exposed equipment, such as cables and antennas, which may negatively impact connectivity. Even moderate rain can lead to reduced internet speeds due to these signal losses.

Snow presents its own set of challenges. Accumulated snow can obstruct satellite dishes and antennas, interrupting service. Heavy snowfall can also complicate the maintenance and repair of telecommunications infrastructure, increasing downtime. In addition, the weight of snow on cables can cause them to sag and, in severe cases, break, seriously disrupting service.

Wind is another critical factor. High winds can damage physical internet infrastructure, including transmission towers and cable lines. Wind-induced movement can knock cables loose or damage connecting equipment, resulting in degraded performance or disconnection. Wind also carries airborne debris that can cause further disruptions.

Lastly, extreme temperatures—both hot and cold—can affect internet hardware. High temperatures may lead to overheating of equipment, while extreme cold can cause brittle plastic components to crack. Both scenarios can lead to interruptions in service. Consequently, understanding how each of these weather conditions affects internet connectivity is crucial for improving service reliability and customer satisfaction.

Impact of Rain on Internet Connectivity

Rainfall can significantly influence internet connectivity. It tends to affect satellite and wireless connections more severely than wired ones. The main issue is a phenomenon called ‘rain fade’, in which raindrops absorb and scatter radio-frequency energy, degrading signal quality and reliability. This attenuation increases with frequency, so it is especially pronounced for satellite internet, which uses high-frequency microwave signals. Even light rain can weaken these signals, resulting in slower speeds and increased latency.
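Rain fade is commonly modeled with a power law. Specific attenuation is γ = k·R^α dB per km, where R is the rain rate in mm/h and k and α depend on frequency and polarization. This is the form used in ITU-R Recommendation P.838. The minimal sketch below assumes that model. The coefficients and the path length are illustrative placeholders, not values from the ITU-R tables.

```python
# Power-law rain attenuation (the form used in ITU-R P.838).
# The coefficients below are ILLUSTRATIVE PLACEHOLDERS, not ITU-R table values.

def specific_attenuation_db_per_km(rain_rate_mm_h: float, k: float, alpha: float) -> float:
    """Specific attenuation gamma = k * R**alpha, in dB per km."""
    if rain_rate_mm_h < 0:
        raise ValueError("rain rate must be non-negative")
    return k * rain_rate_mm_h ** alpha


def path_attenuation_db(rain_rate_mm_h: float, effective_path_km: float,
                        k: float, alpha: float) -> float:
    """Total rain loss along the portion of the signal path that passes through rain."""
    return specific_attenuation_db_per_km(rain_rate_mm_h, k, alpha) * effective_path_km


if __name__ == "__main__":
    K, ALPHA = 0.02, 1.2   # hypothetical coefficients for some frequency band
    PATH_KM = 5.0          # hypothetical slant path through the rain cell
    for rate in (1, 10, 50):  # light, moderate, and heavy rain in mm/h
        loss = path_attenuation_db(rate, PATH_KM, K, ALPHA)
        print(f"{rate:>3} mm/h -> {loss:5.2f} dB")
```

Decibels are logarithmic, so every additional 3 dB of loss roughly halves the received power. For that reason, the step from light to heavy rain can consume a link's entire margin.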

Heavy rain can cause significant service interruptions. During torrential downpours, satellite internet users often report complete losses of connectivity that can last for extended periods. Such outages are particularly damaging for people who rely on these connections for work or for remote access to critical systems. In urban areas, where infrastructure is often more dependable, the direct impact of rain is less pronounced. In rural areas, however, satellite connections are commonplace, and rain-related disruptions can be a substantial inconvenience.

Wireless internet signals are also susceptible to rain. Water droplets in the atmosphere absorb and scatter the signals transmitted from tower to user. As signal strength drops, users can experience buffering or a complete loss of connectivity. Providers often advise users to make sure their equipment, such as antennas and routers, is positioned to minimize obstructions and maximize signal reliability, particularly during rainy weather.
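Whether rain actually drops a wireless link depends on its fade margin. The fade margin is how far the received signal sits above the receiver's sensitivity once path loss and rain loss are subtracted. Below is a minimal link-budget sketch using the standard free-space path loss formula, FSPL = 20·log10(d_km) + 20·log10(f_GHz) + 92.45 dB. The transmitter power, antenna gains, distance, frequency, and receiver sensitivity are hypothetical example values.

```python
import math


def free_space_path_loss_db(distance_km: float, freq_ghz: float) -> float:
    """Free-space path loss in dB for a distance in km and a frequency in GHz."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_ghz) + 92.45


def fade_margin_db(tx_power_dbm: float, tx_gain_dbi: float, rx_gain_dbi: float,
                   distance_km: float, freq_ghz: float,
                   rx_sensitivity_dbm: float, rain_loss_db: float = 0.0) -> float:
    """Received level minus receiver sensitivity; a negative result means the link drops."""
    received_dbm = (tx_power_dbm + tx_gain_dbi + rx_gain_dbi
                    - free_space_path_loss_db(distance_km, freq_ghz)
                    - rain_loss_db)
    return received_dbm - rx_sensitivity_dbm


if __name__ == "__main__":
    # Hypothetical 5 km point-to-point link at 18 GHz.
    link = dict(tx_power_dbm=20, tx_gain_dbi=30, rx_gain_dbi=30,
                distance_km=5, freq_ghz=18, rx_sensitivity_dbm=-75)
    for rain_loss in (0, 10, 30):
        margin = fade_margin_db(**link, rain_loss_db=rain_loss)
        status = "up" if margin >= 0 else "down"
        print(f"rain loss {rain_loss:>2} dB -> margin {margin:6.1f} dB ({status})")
```

With these assumed numbers, the clear-sky margin is about 23 dB. The link therefore survives 10 dB of rain loss but drops at 30 dB.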

In short, rain's impact on internet connectivity is most pronounced for satellite and wireless connections. Understanding the mechanics behind phenomena like rain fade helps users in affected areas manage expectations and seek alternative solutions during adverse weather.

Snow and Ice: A Chilling Effect on Connectivity

Snow and ice can significantly affect internet connectivity. These weather phenomena cause immediate disruptions, and they can also affect the long-term integrity of the physical infrastructure that supports internet services. A primary concern is the accumulation of snow and ice on cables, antennas, and other equipment essential for internet delivery.

The weight of heavy snow can bring down power lines, which directly affects broadband services that depend on those lines for power. Snow accumulation on ground-level broadband infrastructure, such as street cabinets and exposed fiber-optic cable runs, can also cause significant service interruptions. This is particularly common in regions where heavy snowfall is routine. Under such conditions, broadband services can be unavailable for extended periods, disrupting communication and business operations.

Ice storms pose an additional challenge. Freezing rain coats cables and satellite dishes in a layer of ice, which adds weight and, in some instances, causes these systems to fail completely. When a satellite dish is covered in ice, signal strength drops considerably, interrupting service for users who rely on satellite internet. The problem is compounded by the fact that ice tends to persist longer than snow, which prolongs any necessary maintenance and repairs.
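The extra weight that ice adds to a cable can be estimated from simple geometry. A uniform sleeve of radial thickness t around a cable of diameter d has a cross-sectional area of π·t·(d + t). Multiplying that area by the density of ice gives the mass per meter. The sketch below assumes an ice density of about 900 kg/m³, a common approximation for glaze ice. It uses a hypothetical 15 mm cable with 12.5 mm of ice and a 50 m span between poles.

```python
import math

ICE_DENSITY_KG_M3 = 900.0  # approximate density of glaze ice (assumption)
GRAVITY_M_S2 = 9.81


def ice_mass_per_meter(cable_diameter_m: float, radial_ice_m: float,
                       density_kg_m3: float = ICE_DENSITY_KG_M3) -> float:
    """Mass of a uniform ice sleeve, in kg per meter of cable.

    Sleeve area = pi * ((d/2 + t)**2 - (d/2)**2) = pi * t * (d + t).
    """
    area_m2 = math.pi * radial_ice_m * (cable_diameter_m + radial_ice_m)
    return density_kg_m3 * area_m2


if __name__ == "__main__":
    d, t, span_m = 0.015, 0.0125, 50.0  # hypothetical cable, ice thickness, and span
    per_meter = ice_mass_per_meter(d, t)
    span_kg = per_meter * span_m
    print(f"{per_meter:.2f} kg/m of ice, {span_kg:.0f} kg per span, "
          f"{span_kg * GRAVITY_M_S2:.0f} N of added load")
```

Under these assumptions, a single span carries roughly 49 kg of extra ice. The poles and the cable must bear this load on top of the cable's own weight and any wind load.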

In rural areas, where service may already be less robust, heavy snow and ice can be especially damaging. Hazardous road conditions can prevent technicians from reaching affected sites promptly, which delays repairs. This cascade of environmental factors highlights vulnerabilities in our internet infrastructure. It also shows the need for greater awareness of, and preparedness for, weather-related disruptions. Ultimately, understanding how snow and ice affect internet connectivity helps consumers and service providers alike develop better strategies for resilience against these chilling effects.

Wind and Storms: The Force of Nature on Connectivity

High winds and severe storms pose significant threats to internet connectivity, disrupting service through physical damage to infrastructure. Telecommunications lines, which support internet networks, are particularly vulnerable. Sufficiently strong gusts can topple utility poles, bringing down power lines and any telecommunications cables strung on the same poles. The internet services that depend on those lines go down with them. Storms can also cause trees and debris to fall on cables, leading to widespread outages.
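Stronger gusts are far more damaging because the wind load on a cable grows with the square of wind speed. Dynamic pressure is q = ½·ρ·v², and the force per meter on a round cable is roughly q·C_d·d. The sketch below assumes sea-level air density (about 1.225 kg/m³) and a drag coefficient of 1.0, a rough approximation for a long cylinder. It uses a hypothetical 15 mm cable.

```python
AIR_DENSITY_KG_M3 = 1.225  # sea-level standard atmosphere
DRAG_COEFFICIENT = 1.0     # rough value for a long cylinder (assumption)


def wind_force_per_meter(wind_speed_m_s: float, cable_diameter_m: float,
                         air_density: float = AIR_DENSITY_KG_M3,
                         drag_coefficient: float = DRAG_COEFFICIENT) -> float:
    """Approximate wind force on a cable, in newtons per meter of length."""
    dynamic_pressure_pa = 0.5 * air_density * wind_speed_m_s ** 2
    return dynamic_pressure_pa * drag_coefficient * cable_diameter_m


if __name__ == "__main__":
    for kmh in (50, 100, 150):
        v = kmh / 3.6  # convert km/h to m/s
        print(f"{kmh:>3} km/h -> {wind_force_per_meter(v, 0.015):5.2f} N/m")
```

Doubling the wind speed quadruples the load. This is why damage tends to rise sharply once gusts pass a certain strength.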

Case studies illustrate the severe consequences storms can have on internet connectivity. Hurricane Sandy, for instance, ravaged the eastern United States in 2012 and caused extensive infrastructure damage. Thousands of homes and businesses lost internet service as power outages, flooding, and damaged cables and equipment took networks offline. The recovery required considerable time and resources, underscoring how fragile internet service can be in the face of severe weather. Similarly, windstorms in Europe in 2019 caused significant internet outages as high winds uprooted trees and severed cables crucial for connectivity.

In addition to tangible damage, the cascading effects of wind and storms can lead to longer-term connectivity issues. Even after immediate repairs are made, the cumulative impact of multiple outages can result in degraded service quality, leading to persistent disruptions for consumers. ISPs often face challenges not only in restoring service but also in enhancing their infrastructure to withstand future severe weather events effectively. This necessitates ongoing investment and adaptation strategies in the telecommunications sector.

Understanding how wind and storms impact internet connectivity highlights the importance of resilient infrastructure. As severe weather patterns become more common due to climate change, the need for robust systems capable of withstanding such natural forces is paramount. Telecommunications companies must prioritize the protection and reinforcement of their networks to ensure reliable internet access amid increasingly unpredictable weather patterns.

Extreme Temperatures and Connectivity Challenges

Extreme temperatures, high or low, can significantly affect internet connectivity and service performance. One key mechanism is the thermal expansion and contraction of the materials used in networking equipment and cabling. In regions with intense heat, for instance, prolonged heat exposure can age cable insulation until it becomes brittle and more susceptible to physical stress and damage. This degradation can impair the quality of signals carried over fiber-optic or copper cables, ultimately affecting overall internet performance.

Beyond physical damage, high temperatures can also hinder the performance of network devices such as routers, switches, and servers. These devices are designed to operate within specific temperature ranges. When exposed to excessive heat, they may throttle their performance to avoid overheating, which shows up as slower speeds, higher latency, or even outages. In colder months, network equipment can suffer from condensation, which may cause short circuits or other electrical failures that interrupt service.
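The throttling behavior described above amounts to a policy that compares a device's temperature with its rated operating range. The sketch below illustrates that idea. The 0 to 40 °C range and the 5 °C throttling band are hypothetical values. Real devices implement this logic in firmware with their own vendor-specific thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingRange:
    """Hypothetical rated operating range for a piece of network equipment."""
    min_c: float
    max_c: float
    throttle_band_c: float = 5.0  # start throttling this many degrees below max_c


def thermal_action(temp_c: float, spec: OperatingRange) -> str:
    """Map a temperature reading to an action under this hypothetical policy."""
    if temp_c < spec.min_c:
        return "below_range"  # too cold: outside the rated range
    if temp_c > spec.max_c:
        return "shutdown"     # protect the hardware
    if temp_c >= spec.max_c - spec.throttle_band_c:
        return "throttle"     # reduce load to shed heat
    return "normal"


if __name__ == "__main__":
    router = OperatingRange(min_c=0.0, max_c=40.0)
    for reading in (-5.0, 22.0, 37.0, 45.0):
        print(reading, thermal_action(reading, router))
```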

The impact of extreme cold is not limited to the devices themselves but also extends to the cabling infrastructure. Cable materials may contract, leading to increased tension and potential breaks if improperly installed. Areas prone to freezing temperatures may require specialized insulation or additional protective measures to ensure the integrity of network connections. Such environmental considerations are essential for maintaining reliable internet service, particularly for users in regions with dramatic seasonal fluctuations.
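The contraction described above follows the linear thermal expansion formula ΔL = α·L·ΔT. As a worked example, the sketch below uses an expansion coefficient of about 17 × 10⁻⁶ per °C, an approximate value for copper. It assumes a hypothetical 100 m cable run cooling from 30 °C to -20 °C. Real cables combine several materials, so their effective coefficient will differ.

```python
COPPER_ALPHA_PER_C = 17e-6  # approximate linear expansion coefficient of copper


def length_change_m(length_m: float, alpha_per_c: float, delta_t_c: float) -> float:
    """Linear thermal expansion: delta_L = alpha * L * delta_T (negative means shrinkage)."""
    return alpha_per_c * length_m * delta_t_c


if __name__ == "__main__":
    change = length_change_m(100.0, COPPER_ALPHA_PER_C, -20.0 - 30.0)
    print(f"length change: {change * 100:.1f} cm")
```

The run shrinks by about 8.5 cm. If the cable is anchored tightly at both ends with no slack, that shortening turns into added tension.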

Therefore, understanding how extreme temperatures affect internet connectivity is crucial for both consumers and service providers. By acknowledging these environmental challenges, proactive measures can be implemented to enhance the resilience and reliability of internet services, ensuring consistent performance despite weather fluctuations.

Preparedness and Mitigation Strategies

Internet connectivity has become essential for both personal and professional life, so mitigating the impact of inclement weather on service reliability is a high priority. Internet Service Providers (ISPs) and consumers alike can take a range of steps to prepare for connectivity problems during adverse weather.

One of the main strategies for consumers is to invest in a backup connection, such as a mobile hotspot or a secondary ISP. A backup can provide a vital lifeline during outages caused by storms or other extreme weather. It works best when it relies on different infrastructure from the primary link, so that a single storm is less likely to take down both. ISPs can also offer bundled services that include backup options, so customers stay connected even when primary systems fail. Such redundancy can significantly reduce the disruption customers experience during outages.
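Automatic failover to a backup connection comes down to probing links in priority order and using the first one that works. The sketch below uses a TCP connection attempt as the health check and identifies each link by a hypothetical name. Actually switching the system's default route is operating-system specific and is not covered here.

```python
import socket
from typing import Callable, Optional, Sequence


def tcp_reachable(host: str, port: int = 443, timeout: float = 2.0,
                  source_ip: Optional[str] = None) -> bool:
    """True if a TCP connection to host:port succeeds within the timeout.

    Passing source_ip binds the socket to that local address, which is one way
    to test a specific interface (for example, the backup link's address).
    """
    source = (source_ip, 0) if source_ip else None
    try:
        with socket.create_connection((host, port), timeout=timeout,
                                      source_address=source):
            return True
    except OSError:  # refused, unreachable, unresolvable, or timed out
        return False


def choose_link(links: Sequence[str], probe: Callable[[str], bool]) -> Optional[str]:
    """Return the highest-priority link whose probe succeeds, or None if all fail."""
    for link in links:
        if probe(link):
            return link
    return None


if __name__ == "__main__":
    # Simulated outage: pretend the primary link is down during a storm.
    down = {"fiber"}
    print("active link:", choose_link(["fiber", "lte-hotspot"],
                                      lambda name: name not in down))
```

In a real deployment, the probe would call `tcp_reachable` with the local address of each interface instead of consulting a simulated outage set. The link names above are hypothetical.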

Community approaches can strengthen this redundancy further. Collaborating with local governments and organizations can help establish frameworks for maintaining connectivity during major weather events. For instance, community mesh networks can provide an alternative means of communication when traditional ISPs are compromised, improving resilience to environmental challenges.

ISPs can also invest in infrastructure improvements, such as moving aerial lines underground and upgrading hardware to withstand harsh weather. These measures improve service continuity during adverse conditions and contribute to the overall robustness of the internet as a utility.

Consumers should also learn how weather can affect internet service. Awareness campaigns and resources from ISPs can help users prepare in advance, so they have the tools and knowledge to respond quickly when connectivity issues arise. Ultimately, a proactive approach to preparedness can greatly reduce the impact of weather-related connectivity disruptions.

Future Trends: Weather-Resilient Technologies

The ever-evolving nature of technology continues to offer promising solutions that bolster internet connectivity in the face of challenging weather conditions. As adverse weather phenomena become more frequent due to climate change, the importance of adopting weather-resilient technologies has come to the forefront. Innovations in fiber optics, satellite communication, and infrastructure development are paving the way for enhanced resilience in internet connectivity.

Advances in fiber-optic technology are particularly noteworthy. Fiber carries data at very high speeds over long distances. It generally withstands harsh weather better than traditional copper cable, in part because it is immune to electromagnetic interference. Newer materials and construction methods are also producing fiber cabling that is even more durable against extreme temperatures, moisture, and physical impacts. Such improvements help maintain connectivity even during severe storms.

In satellite technology, ongoing developments aim to improve connectivity resilience. Low Earth Orbit (LEO) satellite systems, for instance, are being launched to provide faster, more reliable internet service to remote locations where terrestrial infrastructure is vulnerable to weather disruptions. LEO satellites orbit far closer to Earth than traditional geostationary satellites, so they offer much lower latency. They still operate at frequencies affected by rain fade, however, so their resilience in bad weather depends on the frequency band and system design.
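The latency gap between LEO and geostationary satellites follows largely from distance. The sketch below computes the minimum round-trip propagation delay for a "bent-pipe" link, in which a request travels from user to satellite to ground station and the reply returns the same way. It assumes the satellite is directly overhead and ignores processing and queueing delays. The geostationary altitude of 35,786 km is standard. The 550 km LEO altitude is an illustrative figure similar to some current constellations.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
GEO_ALTITUDE_KM = 35_786.0
LEO_ALTITUDE_KM = 550.0  # illustrative LEO altitude (assumption)


def bent_pipe_rtt_ms(altitude_km: float) -> float:
    """Minimum round-trip propagation delay, in milliseconds.

    A request travels user -> satellite -> ground station and the reply returns
    the same way: four legs, each at least as long as the orbital altitude.
    """
    return 4 * altitude_km / SPEED_OF_LIGHT_KM_S * 1000


if __name__ == "__main__":
    print(f"GEO: {bent_pipe_rtt_ms(GEO_ALTITUDE_KM):.0f} ms")
    print(f"LEO: {bent_pipe_rtt_ms(LEO_ALTITUDE_KM):.1f} ms")
```

That works out to roughly 477 ms for GEO versus about 7 ms for LEO before any other delays are added.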

Another area of focus is weatherproofing network infrastructure. This includes designing and building equipment enclosures that protect vital components from rain, snow, and extreme temperatures. Innovations such as self-healing networks, which automatically reroute data during disruptions, also help maximize uptime.
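Self-healing networks reroute traffic around failed links. Production systems often rely on mechanisms such as precomputed protection paths. The core idea can still be sketched as a shortest-path search that skips links marked as failed. The example below is a plain Dijkstra implementation over a hypothetical four-node topology with made-up link costs, not a model of any particular vendor's rerouting scheme.

```python
import heapq
from typing import Dict, FrozenSet, List, Optional, Tuple

Graph = Dict[str, Dict[str, float]]


def shortest_path(graph: Graph, src: str, dst: str,
                  failed: FrozenSet[Tuple[str, str]] = frozenset()) -> Optional[List[str]]:
    """Dijkstra's algorithm that ignores links listed in `failed` (in either direction)."""
    dist = {src: 0.0}
    prev: Dict[str, str] = {}
    heap = [(0.0, src)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue
        visited.add(node)
        if node == dst:
            break
        for nbr, cost in graph.get(node, {}).items():
            if (node, nbr) in failed or (nbr, node) in failed:
                continue  # link is down: route around it
            nd = d + cost
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    if dst not in visited:
        return None  # no surviving path
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return path[::-1]


if __name__ == "__main__":
    # Hypothetical topology; link costs are arbitrary illustrative numbers.
    topology: Graph = {
        "A": {"B": 1, "C": 2},
        "B": {"A": 1, "D": 1},
        "C": {"A": 2, "D": 2},
        "D": {"B": 1, "C": 2},
    }
    print(shortest_path(topology, "A", "D"))
    print(shortest_path(topology, "A", "D", frozenset({("B", "D")})))  # storm cuts B-D
```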

Finally, smart infrastructure plays a critical role in weather-resilient technologies. Integrated systems that monitor environmental conditions can optimize network performance and preemptively react to forecasted weather events. The fusion of these technological advancements represents a significant step towards securing internet connectivity against the multitude of challenges posed by adverse weather.
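At its simplest, a weather-aware monitoring system can be reduced to rules that map forecast conditions to preparatory actions. The sketch below is purely illustrative. The forecast fields, thresholds, and action strings are hypothetical, and a real operator would tune the thresholds for each site and frequency band.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ForecastHour:
    """One hour of a hypothetical forecast feed."""
    hour: int
    rain_mm_h: float
    wind_gust_kmh: float
    temp_c: float


# Hypothetical thresholds, for illustration only.
RAIN_FADE_MM_H = 25.0
WIND_DAMAGE_KMH = 90.0
FREEZE_C = 0.0


def planned_actions(forecast: List[ForecastHour]) -> List[str]:
    """Return preparatory actions triggered by the forecast, in time order."""
    actions = []
    for f in forecast:
        if f.rain_mm_h >= RAIN_FADE_MM_H:
            actions.append(f"hour {f.hour}: shift satellite/microwave traffic to a backup path")
        if f.wind_gust_kmh >= WIND_DAMAGE_KMH:
            actions.append(f"hour {f.hour}: stage repair crews near exposed aerial lines")
        if f.temp_c <= FREEZE_C and f.rain_mm_h > 0:
            actions.append(f"hour {f.hour}: icing risk, inspect dishes and aerial cables")
    return actions


if __name__ == "__main__":
    forecast = [
        ForecastHour(hour=6, rain_mm_h=2.0, wind_gust_kmh=30.0, temp_c=-1.0),
        ForecastHour(hour=12, rain_mm_h=35.0, wind_gust_kmh=95.0, temp_c=4.0),
    ]
    for action in planned_actions(forecast):
        print(action)
```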

Conclusion: The Crucial Link Between Weather and Connectivity

The relationship between weather and internet connectivity is a complex yet significant one. Throughout the discussion, it has become clear that various weather phenomena, such as heavy rain, wind, snow, and extreme temperatures, can substantially affect the performance and reliability of internet services. These environmental factors can lead to disruptions in signal transmission, latency issues, and even complete service outages, depending on the infrastructure in place.

Different types of internet connections also respond differently to weather. Satellite internet, for example, is susceptible to signal attenuation during storms. Fiber-optic connections are less affected by the weather itself but can still suffer physical damage during severe events. Understanding these differences is crucial for individuals and businesses that rely heavily on stable internet service.

This awareness not only helps consumers make informed decisions when selecting internet services but also sets the stage for evaluating potential solutions to mitigate service interruptions due to adverse weather. By factoring in local climate conditions and historical weather patterns, users can better prepare for connectivity challenges while seeking out providers that offer more resilient options. Consequently, as digital connectivity continues to play an invaluable role in both personal and professional spheres, recognizing the interplay between weather and internet service is essential for ensuring consistent access to the resources we rely on.

Ultimately, understanding the impact of environmental conditions on internet connectivity empowers users to anticipate service issues and advocate for more robust service options. It is imperative to stay informed and proactive about how weather can influence connectivity, thereby minimizing disruptions in our increasingly digital lives.