How to Choose the Right VoIP Solution for Your Needs

- Introduction to VoIP Technology

- Understanding the Essential Components

- Choosing the Right Tech Stack

- Designing the User Interface

- Implementing Core Functionality

- Handling Quality of Service (QoS)

- Integrating with Other Services

- Testing and Debugging the VoIP Feature

- Deployment and Scalability Considerations

Introduction to VoIP Technology

Voice over Internet Protocol, commonly known as VoIP, is a major advance in communication technology. Instead of relying on traditional circuit-switched telephone networks, which reserve a dedicated circuit for each call, VoIP digitizes speech and transmits it as packets over packet-switched IP networks such as the internet. This shift lets users make voice calls over their existing internet connection and makes voice communication more efficient and flexible.

VoIP has become central to modern communications, and several benefits explain its growing adoption among businesses and consumers. First, VoIP can substantially reduce the cost of phone service. Long-distance and international calls, which typically incur hefty charges on conventional systems, can often be made at minimal or no additional cost. This makes VoIP particularly advantageous for businesses with a remote workforce or international operations.

VoIP is also flexible. Users can make and receive calls from smartphones, tablets, and computers, provided they have an internet connection, which suits a mobile, always-connected way of working. In addition, VoIP systems often include features such as voicemail-to-email, call forwarding, and video conferencing.

As VoIP technology evolves, its applications extend beyond voice calls. Businesses build collaboration tools on it that combine calling with video conferencing and messaging, and customer service teams rely on features such as interactive voice response (IVR) systems. Given this range of use cases, anyone building a VoIP call feature should understand its core principles and applications.

Understanding the Essential Components

Implementing a Voice over Internet Protocol (VoIP) call feature involves several components that must work together. At the heart of most VoIP systems is the Session Initiation Protocol (SIP), the signaling protocol used to establish, modify, and terminate sessions such as voice calls. SIP works through requests (for example, INVITE and BYE) and responses (for example, 180 Ringing and 200 OK) exchanged between clients and servers, and it locates users so that calls are routed correctly across networks. SIP does not itself describe the media: codecs, IP addresses, and ports are described with the Session Description Protocol (SDP), which is carried in SIP message bodies and negotiated through an offer/answer exchange.

The Real-time Transport Protocol (RTP) carries the actual audio and video streams once SIP has set up the call. RTP usually runs over UDP and does not itself guarantee delivery or timing; instead, each packet carries a sequence number, a timestamp, and a source identifier (SSRC), which let the receiver detect lost packets, restore their order, and play the audio out at the right pace. This is what keeps delay low and audio quality acceptable. SIP establishes the session and RTP carries the media, and together they provide real-time interaction between the parties.
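RTP's ordering and timing information lives in a 12-byte fixed header defined in RFC 3550 (version, marker bit, payload type, sequence number, timestamp, SSRC). The Python sketch below packs and parses that header. It assumes no CSRC list, padding, or header extension, and the function names are illustrative rather than part of any library.

```python
import struct

RTP_VERSION = 2
RTP_HEADER = struct.Struct("!BBHII")  # network byte order, 12 bytes total

def pack_rtp_header(seq: int, timestamp: int, ssrc: int,
                    payload_type: int = 0, marker: bool = False) -> bytes:
    """Build a fixed RTP header with P=0, X=0 and no CSRCs."""
    byte0 = RTP_VERSION << 6                      # version in the top two bits
    byte1 = (int(marker) << 7) | (payload_type & 0x7F)
    return RTP_HEADER.pack(byte0, byte1, seq & 0xFFFF,
                           timestamp & 0xFFFFFFFF, ssrc & 0xFFFFFFFF)

def parse_rtp_header(packet: bytes) -> dict:
    """Extract the fields a receiver uses to reorder packets and schedule playout."""
    if len(packet) < RTP_HEADER.size:
        raise ValueError("packet shorter than the 12-byte fixed RTP header")
    byte0, byte1, seq, timestamp, ssrc = RTP_HEADER.unpack_from(packet)
    return {
        "version": byte0 >> 6,
        "marker": bool(byte1 >> 7),
        "payload_type": byte1 & 0x7F,
        "sequence": seq,
        "timestamp": timestamp,
        "ssrc": ssrc,
        "payload": packet[RTP_HEADER.size:],      # valid only because CC is assumed 0
    }
```

For G.711 μ-law (static payload type 0, 8000 Hz clock) sent in 20 ms packets, the sequence number increases by 1 and the timestamp by 160 from one packet to the next.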

Media servers also play a vital role in VoIP infrastructure, acting as points of control for audio, video, and data streams. They handle call processing and conferencing, and they can transcode media between formats so that devices using different codecs can still communicate. Codecs encode and compress digitized audio for transmission, and the choice of codec directly affects both call quality and the bandwidth each call consumes.
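Because codec choice drives bandwidth, a back-of-the-envelope calculation helps when sizing links. The sketch below assumes RTP over UDP over IPv4 (12 + 8 + 20 = 40 bytes of headers per packet), ignores link-layer framing, and uses the nominal bit rates of G.711 (64 kbit/s) and G.729 (8 kbit/s); the function name is illustrative.

```python
def stream_bandwidth_kbps(codec_kbps: float, packet_ms: float,
                          header_bytes: int = 40) -> float:
    """One-way IP bandwidth of a single voice stream, in kbit/s.

    header_bytes defaults to RTP (12) + UDP (8) + IPv4 (20).
    Link-layer overhead such as Ethernet framing is not included.
    """
    payload_bytes = codec_kbps * packet_ms / 8     # kbit/s * ms = bits; / 8 = bytes
    packets_per_second = 1000 / packet_ms
    return (payload_bytes + header_bytes) * 8 * packets_per_second / 1000

# G.711 at 20 ms: 160-byte payload + 40 bytes of headers, 50 packets/s -> 80 kbit/s
print(stream_bandwidth_kbps(64, 20))
# G.729 at 20 ms: 20-byte payload + 40 bytes of headers, 50 packets/s -> 24 kbit/s
print(stream_bandwidth_kbps(8, 20))
```

Header overhead dominates for low-bit-rate codecs: G.729's payload is one eighth the size of G.711's, yet the stream still needs a bit under a third of the bandwidth. Longer packetization intervals reduce that overhead at the cost of extra delay.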

Signaling servers, such as SIP proxies and registrars, complement SIP endpoints by managing session setup and control: registrars record where each user can currently be reached, and proxies route signaling messages toward the right destination. Beyond these components, reliable performance and high quality of service (QoS) depend on the underlying infrastructure, including adequate bandwidth, reliable network connections, and redundancy to survive outages. Understanding these components lets developers build a VoIP call feature that meets user expectations for clarity and reliability.

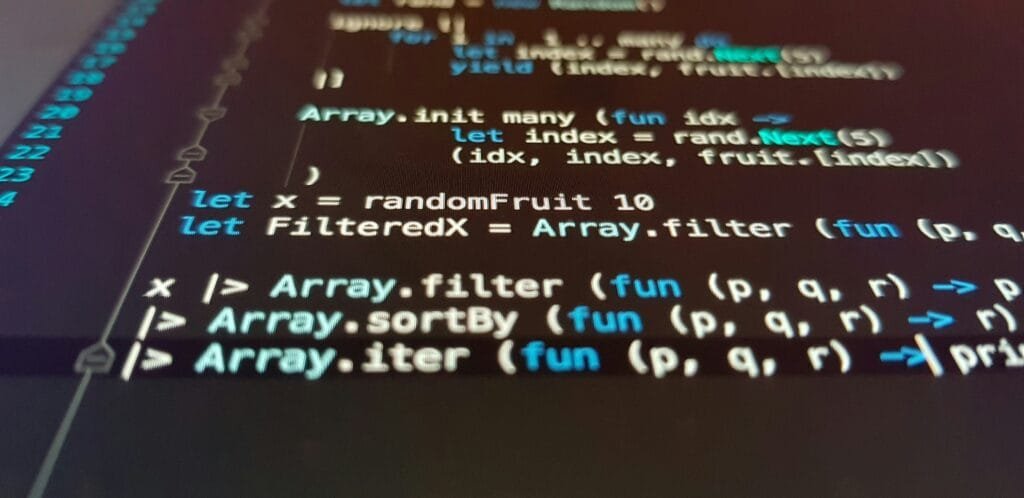

Choosing the Right Tech Stack

When developing a VoIP call feature, selecting the appropriate tech stack is crucial for functionality, scalability, and maintainability. A tech stack typically combines programming languages, frameworks, and tools for both backend and frontend development. This section examines several options to help developers choose based on their project requirements.

For the backend, popular choices include Node.js (a JavaScript runtime), Python, and Java, each with distinct strengths. Node.js is well suited to real-time applications like VoIP because its event-driven, non-blocking I/O model handles many concurrent connections, such as signaling and WebSocket traffic, efficiently. Python, known for its clear syntax and extensive libraries, supports rapid development, whereas Java offers strong performance and scales well for larger applications. The choice often comes down to team familiarity and specific project needs.

On the frontend, frameworks such as React or Angular help build responsive, interactive interfaces. React's component-based architecture encourages reusable UI components, which speeds up development. Angular, on the other hand, is a more comprehensive framework with built-in routing, forms handling, and dependency injection, which can benefit larger teams working on complex projects. Whichever framework is chosen, its integration with backend services is critical to delivering seamless VoIP functionality.

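Developers must also consider libraries and APIs that support VoIP functionality. WebRTC, for instance, is an open standard with a widely used open-source implementation that enables real-time audio, video, and data exchange directly between web browsers and mobile apps. WebRTC deliberately leaves signaling undefined, so applications still need their own channel, such as a WebSocket server or SIP over WebSocket, to exchange session descriptions, and they typically rely on STUN and TURN servers to traverse NATs and firewalls. With these pieces in place, developers can implement real-time audio and video calls with low latency.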

In short, the right tech stack depends on both the project's requirements and the team's capabilities. Aligning the two makes it much easier to build a VoIP call feature that meets user expectations.

Designing the User Interface

An effective user interface (UI) for a VoIP call feature makes the feature accessible and encourages users to engage with it. A well-designed interface simplifies the user experience and raises overall satisfaction. Several key elements contribute to a seamless experience.

First, the layout should be intuitive. Users should be able to navigate the interface easily, with all necessary elements clearly visible. Important UI components include call buttons, contact lists, and call history displays. Placing call buttons prominently makes initiating a call quick and straightforward, and visual cues such as color coding or icons can guide users through their tasks.

Accessibility is another critical consideration in UI design. Ensuring that the interface is navigable via keyboard shortcuts and compatible with screen readers can significantly improve usability for individuals with disabilities. Additionally, the text size should be adjustable, and high-contrast color schemes should be provided to accommodate users with visual impairments.

Responsiveness across various devices is equally important. The user interface should adapt seamlessly to different screen sizes, allowing users to experience the same level of functionality whether they are on a smartphone, tablet, or desktop. A responsive design can be achieved through the use of flexible grid layouts, which ensure that all UI elements remain appropriately scaled and positioned across different platforms.

Ultimately, the design of a VoIP call interface should focus on creating a user-friendly experience that enhances engagement. By adhering to best practices in UI design—ensuring intuitiveness, accessibility, and responsiveness—developers can create an effective tool that meets the needs of diverse users.

Implementing Core Functionality

Core functionality determines whether a Voice over Internet Protocol (VoIP) call feature works reliably. This section covers initiating and receiving calls, answering them, managing call sessions, and terminating calls. Each of these combines a signaling protocol, which sets up and tears down the call, with media handling, which carries the audio.

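To initiate a call, developers typically use the Session Initiation Protocol (SIP). The caller sends an INVITE request such as the one below. Via records the path for responses, Max-Forwards limits the number of hops, From and To identify the parties, and Call-ID together with the tags identifies the dialog. In practice the INVITE body carries an SDP offer describing the caller's media; it is omitted here, so Content-Length is 0 and no Content-Type header is needed. The From and Contact addresses are placeholders for the caller's own SIP URI and reachable address.

    INVITE sip:recipient@example.com SIP/2.0
    Via: SIP/2.0/UDP sender.example.com:5060;branch=z9hG4bK776sgdj
    Max-Forwards: 70
    To: <sip:recipient@example.com>
    From: <sip:sender@example.com>;tag=12345
    Call-ID: 123456789@sender.example.com
    CSeq: 1 INVITE
    Contact: <sip:sender@sender.example.com:5060>
    Content-Length: 0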
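In application code, such a request is usually produced by a SIP stack rather than by hand, but a small builder shows the rules that matter: header lines end in CRLF, a blank line separates headers from the body, Content-Length counts body bytes, and each new transaction gets a fresh Via branch starting with the RFC 3261 magic cookie `z9hG4bK`. This is a minimal sketch; `build_invite` and all addresses are hypothetical.

```python
import uuid

def build_invite(request_uri: str, from_uri: str, to_uri: str, contact_uri: str,
                 via_sent_by: str, from_tag: str, call_id: str,
                 cseq: int = 1, sdp: str = "") -> str:
    """Assemble a minimal SIP INVITE (hypothetical helper, not a library API)."""
    # Each new transaction needs a unique branch that starts with the magic cookie.
    branch = "z9hG4bK" + uuid.uuid4().hex[:16]
    body = sdp.encode("utf-8")
    lines = [
        f"INVITE {request_uri} SIP/2.0",
        f"Via: SIP/2.0/UDP {via_sent_by};branch={branch}",
        "Max-Forwards: 70",
        f"To: <{to_uri}>",
        f"From: <{from_uri}>;tag={from_tag}",
        f"Call-ID: {call_id}",
        f"CSeq: {cseq} INVITE",
        f"Contact: <{contact_uri}>",
    ]
    if body:
        # Content-Type is only meaningful when there is a body (the SDP offer).
        lines.append("Content-Type: application/sdp")
    lines.append(f"Content-Length: {len(body)}")  # counts bytes, not characters
    # Header lines end in CRLF, and an empty line separates headers from the body.
    return "\r\n".join(lines) + "\r\n\r\n" + sdp
```

Calling it with the values from the example above reproduces that request, apart from a freshly generated branch.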

On the receiving side, the incoming INVITE alerts the callee's client. It typically responds with 180 Ringing while the user is notified and, once the user answers, with 200 OK carrying its SDP answer. The caller then confirms with an ACK, completing the three-way handshake, after which media flows between the parties over RTP.
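The callee's side of that exchange, answering with responses such as 180 Ringing, can be sketched as follows. Following RFC 3261, a response copies the Via, From, To, Call-ID, and CSeq headers from the request, and the callee adds its own tag to the To header. The helper names are hypothetical, the parser ignores compact header forms and line folding, and a real 200 OK to an INVITE would also include a Contact header and an SDP answer.

```python
from typing import List, Tuple

# Headers a UAS copies from the request into its response (RFC 3261, section 8.2.6.2).
COPIED_HEADERS = {"via", "from", "to", "call-id", "cseq"}

def parse_headers(message: str) -> Tuple[str, List[Tuple[str, str]]]:
    """Split a SIP message into its start line and (name, value) header pairs."""
    head = message.split("\r\n\r\n", 1)[0]
    start_line, *header_lines = head.split("\r\n")
    headers = []
    for line in header_lines:
        name, _, value = line.partition(":")
        headers.append((name.strip(), value.strip()))
    return start_line, headers

def build_response(request: str, status: int, reason: str, to_tag: str) -> str:
    """Build a response such as '180 Ringing' to an incoming request (hypothetical helper)."""
    _, headers = parse_headers(request)
    lines = [f"SIP/2.0 {status} {reason}"]
    for name, value in headers:
        key = name.lower()
        if key not in COPIED_HEADERS:
            continue
        # The callee tags the To header, identifying its side of the dialog.
        if key == "to" and ";tag=" not in value:
            value = f"{value};tag={to_tag}"
        lines.append(f"{name}: {value}")
    lines.append("Content-Length: 0")
    return "\r\n".join(lines) + "\r\n\r\n"
```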

Call session management keeps both endpoints' view of the call consistent. It involves tracking states such as ringing, connected, and on hold. A finite state machine, in which each event is allowed only in certain states, makes these transitions explicit and easy to test.
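A table-driven state machine is one straightforward way to apply this pattern. The states follow the ones named above; the event names are hypothetical labels for what, in SIP terms, typically corresponds to an INVITE being sent or received (`invite`), a 200 OK (`answer`), a CANCEL or error response (`cancel`, `reject`), a re-INVITE that changes the media direction (`hold`, `resume`), and a BYE (`hangup`).

```python
from enum import Enum, auto

class CallState(Enum):
    IDLE = auto()
    RINGING = auto()
    CONNECTED = auto()
    ON_HOLD = auto()
    ENDED = auto()

# Allowed transitions: (current state, event) -> next state.
TRANSITIONS = {
    (CallState.IDLE, "invite"): CallState.RINGING,
    (CallState.RINGING, "answer"): CallState.CONNECTED,
    (CallState.RINGING, "reject"): CallState.ENDED,
    (CallState.RINGING, "cancel"): CallState.ENDED,
    (CallState.CONNECTED, "hold"): CallState.ON_HOLD,
    (CallState.ON_HOLD, "resume"): CallState.CONNECTED,
    (CallState.CONNECTED, "hangup"): CallState.ENDED,
    (CallState.ON_HOLD, "hangup"): CallState.ENDED,
}

class Call:
    """Tracks one call's state and rejects events that are invalid in that state."""

    def __init__(self) -> None:
        self.state = CallState.IDLE
        self.history = [self.state]

    def handle(self, event: str) -> CallState:
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} not allowed in state {self.state.name}")
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state
```

Keeping the transitions in one table means an unexpected event, such as a hold request on a call that is still ringing, fails loudly instead of silently corrupting the session.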

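Lastly, terminating a call involves sending a SIP BYE request to the other party, informing it that the session is ending; the other party confirms with 200 OK. Because the BYE belongs to the same dialog as the INVITE, it reuses the Call-ID and tags, and the caller increments its CSeq number. The abbreviated request below shows only the request line, Call-ID, and CSeq; a complete BYE also carries Via, Max-Forwards, From, and To:

    BYE sip:recipient@example.com SIP/2.0
    Call-ID: 123456789@sender.example.com
    CSeq: 2 BYE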

By implementing these core functionalities, developers can create a robust VoIP call feature that provides an efficient and effective communication platform. Each step in the process contributes to the overall reliability and quality of the VoIP service.

Handling Quality of Service (QoS)

Ensuring high call quality in Voice over Internet Protocol (VoIP) communications involves addressing several challenges, including jitter, latency, and packet loss. These factors can significantly impact the user experience by causing interruptions, distortions, and delays in conversations. By implementing effective Quality of Service (QoS) strategies, developers and network administrators can manage and mitigate these issues, ultimately enhancing call performance.

Jitter is the variation in packet arrival times, which makes audio choppy if packets are played out as soon as they arrive. The standard countermeasure is a jitter buffer, which temporarily holds incoming packets and releases them at a steady pace. Sizing the buffer is a trade-off: a buffer that is too small discards packets that arrive too late, causing audible gaps, while one that is too large adds latency. Adaptive jitter buffers adjust their depth to measured conditions, and monitoring jitter with QoS tools allows proactive adjustments to the network configuration.
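Jitter monitoring usually relies on the interarrival jitter estimate from RFC 3550, which compares how the transit time of consecutive packets changes and smooths the result with a gain of 1/16. The sketch below implements that formula; both arguments must be in RTP clock units (8000 per second for G.711), and the class name is illustrative. An adaptive jitter buffer could use this estimate when choosing its depth, although that policy is not shown.

```python
from typing import Optional

class JitterEstimator:
    """Interarrival jitter as defined in RFC 3550, section 6.4.1 (illustrative class)."""

    def __init__(self) -> None:
        self.jitter = 0.0
        self._prev_transit: Optional[int] = None

    def update(self, arrival: int, rtp_timestamp: int) -> float:
        """Feed one packet; both arguments are in RTP clock units."""
        # Relative transit time; any fixed clock offset cancels out in the difference.
        transit = arrival - rtp_timestamp
        if self._prev_transit is not None:
            d = abs(transit - self._prev_transit)
            # Exponential smoothing with gain 1/16, as the RFC specifies.
            self.jitter += (d - self.jitter) / 16
        self._prev_transit = transit
        return self.jitter
```

With an 8 kHz clock, dividing the estimate by 8 converts it to milliseconds.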

Latency, the delay between one party speaking and the other hearing it, is another critical factor in VoIP quality; ITU-T G.114 recommends keeping one-way delay below about 150 ms for most applications. Latency can result from network congestion, inefficient routing, or inadequate bandwidth. To minimize it, voice traffic should be prioritized over less time-sensitive data using QoS mechanisms such as DiffServ, which superseded the older IP Precedence scheme. Routers configured to honor the marking queue high-priority voice packets ahead of bulk traffic, so voice is less affected by general network fluctuations.
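At the application level, prioritization starts with marking voice packets. The sketch below sets the DiffServ code point to Expedited Forwarding (EF, DSCP 46), the class conventionally used for voice, on a UDP socket. It assumes a Linux or macOS host (other platforms may ignore or restrict this option), the function name is illustrative, and the marking only helps on networks whose routers are configured to honor it.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding, the DiffServ class conventionally used for voice

def make_voice_socket() -> socket.socket:
    """Create a UDP socket whose outgoing IPv4 packets carry DSCP EF."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # DSCP occupies the upper six bits of the old IPv4 TOS byte,
    # so the value written to IP_TOS is 46 << 2 == 0xB8 (184).
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, DSCP_EF << 2)
    return sock
```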

Packet loss also threatens call quality. Even a small percentage of lost packets can cause audible clipping and gaps in the conversation. To combat it, the network should be monitored continuously so that rising loss rates are investigated before users notice them. Codecs with packet loss concealment can mask occasional missing packets, and redundancy in the network infrastructure provides failover paths for VoIP traffic, keeping calls running when a link fails. Together, these strategies for managing jitter, latency, and packet loss produce a noticeably better VoIP experience.
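Because every RTP packet carries a 16-bit sequence number, a receiver can estimate loss without any help from the network. This sketch follows the expected-minus-received approach described in RFC 3550, simplified: it tracks wraparound of the sequence number but omits the RFC's probation and large-jump handling, so duplicate packets can push the count below zero. The function name is illustrative.

```python
from typing import Iterable

def estimate_lost(sequence_numbers: Iterable[int]) -> int:
    """Estimate lost RTP packets from sequence numbers in arrival order."""
    received = 0
    base = highest = None
    cycles = 0                               # multiples of 2**16 from wraparound
    for seq in sequence_numbers:
        received += 1
        if base is None:
            base = highest = seq
            continue
        step = (seq - highest) & 0xFFFF      # forward distance modulo 2**16
        if 0 < step < 0x8000:                # newer than anything seen so far
            if seq < highest:                # counter wrapped past 65535
                cycles += 1 << 16
            highest = seq
        # step == 0 (duplicate) or step >= 0x8000 (late, reordered) leaves highest alone
    if base is None:
        return 0
    expected = cycles + highest - base + 1
    return expected - received
```

A late packet that arrives out of order still counts as received, so reordering alone does not inflate the loss figure.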

Integrating with Other Services

Integrating VoIP (Voice over Internet Protocol) call features with other services, such as SMS, video calls, and other communication APIs, can significantly strengthen an organization's overall communication solution. These integrations give users a more versatile experience and help organizations streamline their communication processes and improve operational efficiency.

One notable integration opportunity is combining VoIP with SMS functionalities. This allows users to send and receive text messages alongside voice calls, providing a seamless communication experience. Such an integration can be particularly beneficial in customer support scenarios, where users might want to switch between calling and texting without leaving the platform. This multipurpose functionality not only heightens user satisfaction but also fosters more effective communication between businesses and their clients.

Integrating video call capabilities is another way to enhance VoIP services. With the increasing demand for face-to-face interaction, adding video to a VoIP solution can greatly improve conversations, making them more personal and engaging. This feature is especially valuable for remote teams and telehealth services, where visual interaction helps in building rapport and clarity.

Furthermore, linking VoIP features with existing platforms, such as Customer Relationship Management (CRM) systems or customer support tools, can create an all-in-one solution that empowers users. For instance, a CRM integration can enable automatic logging of calls, allowing for better record-keeping and follow-up efficiency. This powerful connection between VoIP and other services ensures that businesses maintain comprehensive communication records while improving their productivity.

In conclusion, integrating VoIP with other essential services not only elevates the functionality but also creates a more unified communication experience. By embracing these integrations, organizations can optimize their workflows and ultimately deliver an enhanced experience for their users.

Testing and Debugging the VoIP Feature

Testing and debugging are crucial steps in developing a robust Voice over Internet Protocol (VoIP) call feature. Effective verification of functionality allows developers to identify issues early on and ensure that the feature operates smoothly under various conditions. There are several types of testing that should be conducted to achieve this goal: unit testing, integration testing, and user acceptance testing.

Unit testing evaluates individual components of the VoIP feature in isolation, confirming that each function behaves as expected. Using a framework such as JUnit (Java), NUnit (.NET), or the equivalent in the project's language, developers can write automated tests that validate their code's logic. This step catches issues early, before they grow into larger problems in later stages of development.
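In Python, the standard library's `unittest` module plays the role JUnit or NUnit plays elsewhere. The sketch below tests a small, hypothetical request-line parser that accepts only the six core methods of RFC 3261. Saved as a module, it can be run with `python -m unittest` followed by the module name.

```python
import unittest

# Core methods defined in RFC 3261; extensions such as INFO or UPDATE are not accepted here.
SIP_METHODS = {"INVITE", "ACK", "BYE", "CANCEL", "OPTIONS", "REGISTER"}

def parse_request_line(line: str) -> tuple:
    """Split a SIP request line such as 'INVITE sip:bob@example.com SIP/2.0'."""
    parts = line.strip().split(" ")
    if len(parts) != 3:
        raise ValueError(f"malformed request line: {line!r}")
    method, uri, version = parts
    if method not in SIP_METHODS:
        raise ValueError(f"unsupported method: {method}")
    if version != "SIP/2.0":
        raise ValueError(f"unsupported version: {version}")
    return method, uri, version

class ParseRequestLineTest(unittest.TestCase):
    def test_valid_invite(self):
        self.assertEqual(
            parse_request_line("INVITE sip:bob@example.com SIP/2.0"),
            ("INVITE", "sip:bob@example.com", "SIP/2.0"),
        )

    def test_rejects_unknown_method(self):
        with self.assertRaises(ValueError):
            parse_request_line("FETCH sip:bob@example.com SIP/2.0")

    def test_rejects_missing_version(self):
        with self.assertRaises(ValueError):
            parse_request_line("BYE sip:bob@example.com")
```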

Integration testing follows unit testing and assesses how the VoIP feature interacts with the rest of the system, in particular whether the call feature communicates correctly with servers and endpoints. Tools like Postman or SoapUI suit the HTTP and SOAP APIs around the feature, such as provisioning or call-history endpoints, while SIP-specific tools such as SIPp can generate signaling scenarios and load against the call servers themselves.
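For signaling, a lightweight integration test can exercise a real network path without external infrastructure. The sketch below starts a stub SIP endpoint on the loopback interface, sends it an OPTIONS request over UDP, and checks the status line of the reply. The stub and all names are hypothetical stand-ins; against a real server, a dedicated tool such as SIPp is a better fit for scenario and load testing.

```python
import socket
import threading

def run_stub_sip_server(sock: socket.socket) -> None:
    """Answer one request with a minimal 200 OK (a stand-in for a real SIP server)."""
    data, addr = sock.recvfrom(4096)
    request = data.decode("utf-8")
    # Copy the transaction headers back; a real UAS would also add a To tag.
    copied = [line for line in request.split("\r\n")
              if line.split(":", 1)[0] in ("Via", "From", "To", "Call-ID", "CSeq")]
    response = "\r\n".join(["SIP/2.0 200 OK", *copied, "Content-Length: 0"]) + "\r\n\r\n"
    sock.sendto(response.encode("utf-8"), addr)

def options_round_trip() -> str:
    """Send an OPTIONS request over loopback UDP and return the reply's status line."""
    server = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    server.settimeout(2.0)
    server.bind(("127.0.0.1", 0))          # port 0 lets the OS pick a free port
    port = server.getsockname()[1]
    thread = threading.Thread(target=run_stub_sip_server, args=(server,))
    thread.start()

    client = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    client.settimeout(2.0)
    client.bind(("127.0.0.1", 0))
    client_port = client.getsockname()[1]
    request = "\r\n".join([
        f"OPTIONS sip:test@127.0.0.1:{port} SIP/2.0",
        f"Via: SIP/2.0/UDP 127.0.0.1:{client_port};branch=z9hG4bKtest1",
        "Max-Forwards: 70",
        f"To: <sip:test@127.0.0.1:{port}>",
        "From: <sip:tester@127.0.0.1>;tag=abc",
        "Call-ID: it-001@127.0.0.1",
        "CSeq: 1 OPTIONS",
        "Content-Length: 0",
    ]) + "\r\n\r\n"
    try:
        client.sendto(request.encode("utf-8"), ("127.0.0.1", port))
        data, _ = client.recvfrom(4096)
    finally:
        thread.join()
        client.close()
        server.close()
    return data.decode("utf-8").split("\r\n", 1)[0]
```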

User acceptance testing (UAT) is the final phase before deployment, focusing on real-world use. In this stage, actual users validate whether the VoIP feature meets their expectations and requirements. Gathering feedback can highlight usability issues or reveal areas that require enhancement, ensuring the feature is fit for purpose.

In addition to formal testing, developers should deploy monitoring and logging tools to troubleshoot issues after deployment. Application performance monitoring (APM) tools, combined with call-quality metrics such as jitter, packet loss, and estimated mean opinion score (MOS), provide real-time insight into call quality and system performance and help identify problems quickly. Applying these testing and monitoring strategies improves the reliability and user experience of the VoIP call feature.
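Call-quality dashboards often report a MOS estimated with the ITU-T G.107 E-model, which first computes a transmission rating R from delay, loss, and codec impairments and then maps R onto the MOS scale. The sketch below implements only that final mapping; computing R itself requires the full E-model, and monitoring products may use their own variants. The function name is illustrative.

```python
def r_to_mos(r: float) -> float:
    """Map an E-model transmission rating R to an estimated MOS (ITU-T G.107)."""
    if r <= 0:
        return 1.0
    if r >= 100:
        return 4.5
    return 1 + 0.035 * r + 7e-6 * r * (r - 60) * (100 - r)
```

The curve is steep in the middle of the range: at R = 50 it yields a MOS of about 2.6, while a rating in the low 90s maps to roughly 4.4.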

Deployment and Scalability Considerations

When implementing a Voice over Internet Protocol (VoIP) call feature, deployment strategy and scalability need careful planning. One effective approach is cloud hosting, whether Platform as a Service (PaaS) or Infrastructure as a Service (IaaS), which supplies the infrastructure and resources VoIP communications require. Cloud hosting makes it easier to add or remove capacity as user demand changes and to place servers in several regions close to users.

Load balancing is another essential element of VoIP deployment. It distributes traffic across multiple servers, which is vital for maintaining performance as the number of concurrent users fluctuates. For SIP, the balancer should also keep every message of a call on the same server, since that server holds the call's state. Combining load balancers with dynamic scaling helps absorb incoming connections and reduces the risk of service degradation at peak times; horizontal scaling, in which server instances are added to handle increased load, further improves responsiveness and reliability.
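SIP load balancing has a twist: all messages belonging to one call should reach the same server, because that server holds the call's state. One common way to achieve this is to choose the server from a hash of the SIP Call-ID. The sketch below uses rendezvous (highest-random-weight) hashing: when a server is removed, only the calls assigned to it move, and when one is added, calls move only onto the new server, unlike a plain hash-modulo scheme. The server names are hypothetical, and a production balancer would also track server health.

```python
import hashlib
from typing import Sequence

def _weight(server: str, call_id: str) -> int:
    """Deterministic pseudo-random weight for a (server, call) pair."""
    digest = hashlib.sha256(f"{server}|{call_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")

def pick_server(call_id: str, servers: Sequence[str]) -> str:
    """Return the server with the highest weight for this Call-ID."""
    if not servers:
        raise ValueError("no servers available")
    return max(servers, key=lambda server: _weight(server, call_id))
```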

Moreover, preparing for varying numbers of concurrent users is paramount. Providers need to analyze historical usage data and anticipate growth in user base to effectively allocate resources. By establishing thresholds and automated scaling alerts, organizations can proactively address increases in call volume without sacrificing quality. Additionally, real-time monitoring tools can be integrated into the VoIP system to track performance metrics and user engagement, ensuring timely interventions when performance drops.
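For turning historical data into capacity numbers, the classic telephony tool is the Erlang B formula, which gives the probability that a new call is blocked when a given busy-hour load is offered to a fixed number of channels (for example, concurrent-call slots on a SIP trunk or media server). The sketch below uses the standard recursive form; it assumes random (Poisson) call arrivals and that blocked calls are not retried, and the function names are illustrative. Busy-hour traffic in erlangs is the number of calls per hour multiplied by the average call duration in hours.

```python
def erlang_b(traffic_erlangs: float, channels: int) -> float:
    """Blocking probability for offered traffic on a given number of channels."""
    b = 1.0                      # with zero channels every call is blocked
    for m in range(1, channels + 1):
        b = traffic_erlangs * b / (m + traffic_erlangs * b)
    return b

def channels_needed(traffic_erlangs: float, target_blocking: float = 0.01) -> int:
    """Smallest channel count whose blocking probability is at or below the target."""
    channels = 0
    while erlang_b(traffic_erlangs, channels) > target_blocking:
        channels += 1
    return channels
```

For instance, 300 busy-hour calls averaging 3 minutes each offer 300 × 3 / 60 = 15 erlangs, and `channels_needed(15, 0.01)` returns the smallest channel count that keeps blocking at or below 1%.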

To maintain quality during traffic spikes, best practice is to prioritize voice traffic with QoS mechanisms and optimize the network for VoIP. These measures help voice calls retain their quality and reliability even when the network is heavily loaded. Long-term maintenance should include regular software updates, ongoing performance evaluations, and infrastructure audits to keep the VoIP service healthy as it grows.